Don't Miss the Touch Train!

Add smartphone-like user interface capabilities to your products without adding costs and complexity.

Using Touchscreen Displays in Embedded Applications

When you add connectivity and intelligence to an embedded device, you need a User Interface (UI) so that users can configure the device to their needs (e.g., connect to Wi-Fi® or monitor device status). Consumers are very familiar with touchscreen UIs thanks to smartphones, but the best practices for smartphone touchscreen development don’t necessarily apply to embedded devices.

As they have become common consumer devices, smartphones are now the benchmark for measuring the usability of any other high-tech device equipped with a touchscreen display. Be it a smart thermostat or an infotainment display in a car, consumers will expect touchscreen performance to be as sharp and responsive as what they’ve experienced on their smartphone.

Don’t Follow the Example of Smartphone UIs

When designing a touchscreen interface for an embedded application, a developer would naturally look at how smartphones implement their touchscreens and aim for similar performance in the embedded design. This can present a challenge, as many embedded devices with touchscreens must meet more aggressive price points than the typical smartphone.

Surprisingly, the primary cost driver for implementing a smartphone-like touchscreen is the Operating System (OS). The OS itself might be available for free if you select it wisely. However, you will still need dedicated hardware to run it, and that hardware will increase your application’s bill of materials. Setting up the OS and writing the necessary software will also add to your development time and cost.

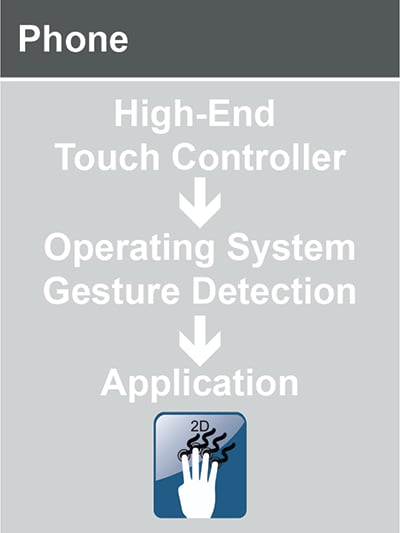

Figure 1

Figure 1 shows a simplified illustration of how gesture detection is implemented on a smartphone. A high-end touch controller is used to acquire multi-touch data as quickly and precisely as possible and the controller sends that data to the OS. The OS analyzes the incoming touch data, detects patterns and maps them to predefined gestures. The identified gesture is then reported to the application in order to perform the user’s desired task. This provides the developer with a high level of confidence that the gesture is recognizable and repeatable for all applications that are available on the smartphone.

What Your Embedded Touch UI Really Needs

If you step back and refocus on the initial task, you may find a better way to move ahead with your design. What does your UI really need? Here are some questions you should ask:

- How many fingers are involved? Given the size of the touchpad and its typical usage on an embedded device like a smart thermostat, this will most likely be one finger, or two at most for gestures such as pinch to zoom.

- How precise or accurate does the interface need to be? If the goal is to detect gestures rather than move a cursor to a specific pixel on a screen, an interface that uses swipe direction and speed might be sufficient.

- How fast does the interface need to be? In most non-smartphone use cases, the interface may only need to recognize an occasional touch command as opposed to the constant stream of touch position data that users create as they navigate between apps on their smartphones.

- How many different applications are you going to run on the device? If it is an embedded device, it will most likely only need to perform one or two specific tasks. A smartphone could potentially need to support dozens or hundreds of applications, depending on what apps the user chooses to install.
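To make the second question concrete, here is a minimal sketch of how a single-finger stroke could be classified from swipe direction and speed alone, without tracking a cursor to a specific pixel. The type names, the `classify_stroke` function and the threshold values are all hypothetical and chosen for illustration; they are not part of any particular touch library, and real thresholds would depend on your sensor's size and resolution.

```c
#include <stdint.h>
#include <stdlib.h>

/* Hypothetical gesture set for illustration; not taken from any library. */
typedef enum {
    GESTURE_NONE,
    GESTURE_TAP,
    GESTURE_SWIPE_LEFT,
    GESTURE_SWIPE_RIGHT,
    GESTURE_SWIPE_UP,
    GESTURE_SWIPE_DOWN
} gesture_t;

/* One finger contact: where it landed, where it lifted, how long it lasted. */
typedef struct {
    int16_t  x_start, y_start;
    int16_t  x_end, y_end;
    uint16_t duration_ms;
} touch_stroke_t;

/* Assumed tuning values, in sensor units and milliseconds. */
#define MIN_SWIPE_DISTANCE  40   /* shorter movements are not swipes      */
#define MIN_SWIPE_SPEED     100  /* sensor units per second               */
#define MAX_TAP_TIME_MS     300  /* longer stationary contacts are ignored */

gesture_t classify_stroke(const touch_stroke_t *s)
{
    int32_t dx  = (int32_t)s->x_end - s->x_start;
    int32_t dy  = (int32_t)s->y_end - s->y_start;
    int32_t adx = labs(dx);
    int32_t ady = labs(dy);
    int32_t dist = (adx > ady) ? adx : ady;  /* dominant-axis travel */

    /* Barely moved: a short contact is a tap, a long one is ignored. */
    if (dist < MIN_SWIPE_DISTANCE) {
        return (s->duration_ms <= MAX_TAP_TIME_MS) ? GESTURE_TAP : GESTURE_NONE;
    }

    /* Speed check without division: dist / time < MIN_SWIPE_SPEED. */
    if (dist * 1000 < (int32_t)MIN_SWIPE_SPEED * s->duration_ms) {
        return GESTURE_NONE;  /* too slow to count as a swipe */
    }

    /* Direction comes from the dominant axis; y is assumed to grow downward. */
    if (adx >= ady) {
        return (dx > 0) ? GESTURE_SWIPE_RIGHT : GESTURE_SWIPE_LEFT;
    }
    return (dy > 0) ? GESTURE_SWIPE_DOWN : GESTURE_SWIPE_UP;
}
```

Only the start point, end point and duration of the contact are used, so a classifier like this needs no continuous stream of position data and very little memory.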

With these considerations in mind, it becomes apparent that a smartphone’s touchscreen infrastructure might be an oversized (and overweight) solution for implementing your embedded application’s touchscreen UI design. Fortunately, we provide a 2D touch library as part of MPLAB® Code Configurator (MCC), a free plugin available in our MPLAB X Integrated Development Environment (IDE).

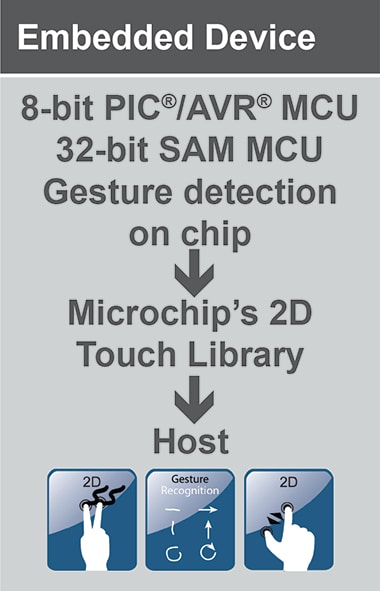

The library runs on our 8- and 32-bit microcontroller (MCU) units that offer built-in touch. The MCU is able to detect gestures and directly report the touch/gesture data to the host. And since the library’s touch/gesture solutions don’t require an OS, there’s no need to invest time and money in developing the hardware and software infrastructure to run one. Figure 2 provides a simplified view of this type of implementation.

Figure 2

You can begin implementing gesture detection in your touchscreen application by following a few simple steps. First, define the parameters of your project: whether you need gesture recognition for one or two fingers, how many rows and columns your sensor needs and which type of sensing method you would like to use. You can then assign the MCU’s pins according to the specific requirements of your design.
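The project parameters listed above can be captured in a small structure and checked against the pins you have available before you commit to a layout. This is only an illustrative sketch: the type and function names are hypothetical and are not what MCC generates. It assumes one sensing pin per row and per column electrode, one measurement per electrode for self-capacitance, and one measurement per row/column crossing for mutual capacitance.

```c
#include <stdbool.h>
#include <stdint.h>

/* Hypothetical names for illustration; MCC generates its own configuration. */
typedef enum { SENSE_SELF_CAP, SENSE_MUTUAL_CAP } sense_method_t;

typedef struct {
    uint8_t        rows;         /* row electrodes on the sensor    */
    uint8_t        cols;         /* column electrodes on the sensor */
    uint8_t        max_fingers;  /* 1 or 2 fingers to recognize     */
    sense_method_t method;       /* sensing method                  */
} touch_surface_cfg_t;

/* Assumption: each row and each column electrode uses one MCU pin. */
uint16_t surface_pin_count(const touch_surface_cfg_t *cfg)
{
    return (uint16_t)(cfg->rows + cfg->cols);
}

/* Assumption: self-capacitance measures each electrode once per scan,
 * mutual capacitance measures each row/column crossing once per scan. */
uint16_t surface_measurement_count(const touch_surface_cfg_t *cfg)
{
    if (cfg->method == SENSE_MUTUAL_CAP) {
        return (uint16_t)(cfg->rows * cfg->cols);
    }
    return (uint16_t)(cfg->rows + cfg->cols);
}

/* Basic sanity check of the parameters against the available pins. */
bool surface_cfg_fits(const touch_surface_cfg_t *cfg, uint8_t available_pins)
{
    if (cfg->rows == 0 || cfg->cols == 0) {
        return false;
    }
    if (cfg->max_fingers < 1 || cfg->max_fingers > 2) {
        return false;
    }
    return surface_pin_count(cfg) <= available_pins;
}
```

Under these assumptions, a 5 x 5 mutual-capacitance surface would use 10 pins and take 25 measurements per scan, while the same grid with self-capacitance would take 10.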

Visit our 2D touch web page to learn how the 2D touch library for 8- and 32-bit MCUs and our development tools provide you with all the resources you need to implement a smartphone-like UI without the complex and costly infrastructure.